Despite their name, neural networks are only distantly related to the sorts of things you'd find in a brain. While their organization and the way they transfer data through layers of processing may share some rough similarities with networks of actual neurons, the data and the computations performed on it would look very familiar to a standard CPU.

But neural networks aren't the only way that people have tried to take lessons from the nervous system. There's a separate discipline called neuromorphic computing that's based on approximating the behavior of individual neurons in hardware. In neuromorphic hardware, calculations are performed by lots of small units that communicate with each other through bursts of activity called spikes and adjust their behavior based on the spikes they receive from others.

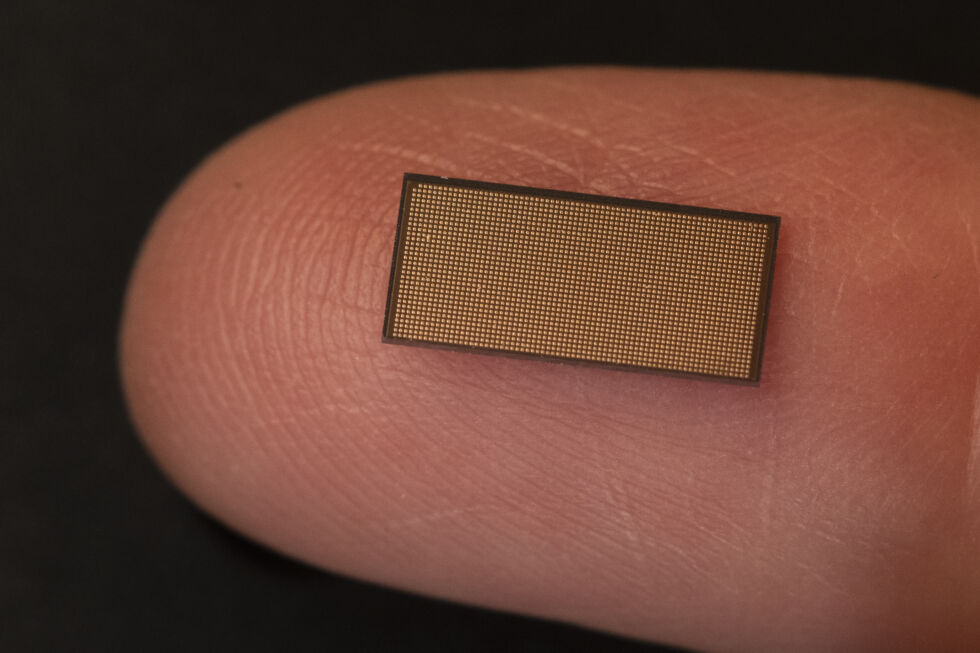

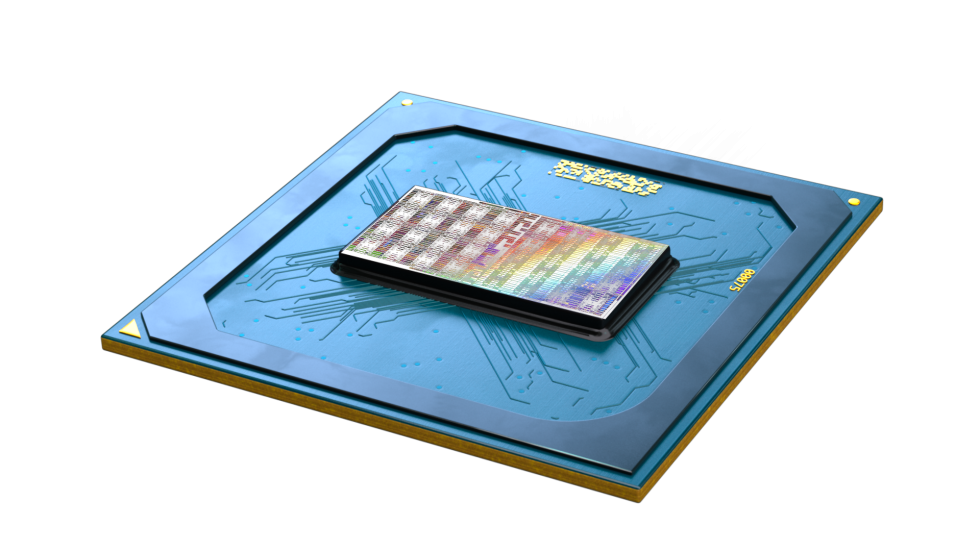

On Thursday, Intel released the newest iteration of its neuromorphic hardware, called Loihi 2. The new release comes with the sorts of things you'd expect from Intel: a better processor and some basic computational enhancements. But it also comes with some fundamental hardware changes that will allow it to run entirely new classes of algorithms. And while Loihi remains a research-focused product for now, Intel is also releasing a compiler that it hopes will drive wider adoption.

To make sense out of Loihi and what's new in this version, let's back up and start by looking at a bit of neurobiology, then build up from there.

From neurons to computation

The foundation of the nervous system is the cell type called a neuron. All neurons share a few common functional features. At one end of the cell is a structure called a dendrite, which you can think of as a receiver. This is where the neuron receives inputs from other cells. Nerve cells also have axons, which act as transmitters, connecting with other cells to pass along signals.

The signals take the form of what are called "spikes," which are brief changes in the voltage across the neuron's cell membrane. Spikes travel down axons until they reach the junctions with other cells (called synapses), at which point they're converted to a chemical signal that travels to the nearby dendrite. This chemical signal opens up channels that allow ions to flow into the cell, starting a new spike on the receiving cell.

The receiving cell integrates a variety of information—how many spikes it has seen, whether any neurons are signaling that it should be quiet, how active it was in the past, etc.—and uses that to determine its own activity state. Once a threshold is crossed, it'll trigger a spike down its own axons and potentially trigger activity in other cells.

Typically, this results in sporadic, randomly spaced spikes of activity when the neuron isn't receiving much input. Once it starts receiving signals, however, it'll switch to an active state and fire off a bunch of spikes in rapid succession.
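The integrate-then-fire behavior described above is often approximated with a "leaky integrate-and-fire" model. The sketch below is a toy illustration of that idea, not a biological model and not anything taken from Loihi; the class name, the leak factor, and the threshold value are all assumptions chosen for readability, and it leaves out the sporadic background firing entirely.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class LIFNeuron:
    """Toy leaky integrate-and-fire neuron (illustrative values only)."""
    threshold: float = 1.0   # potential at which the neuron fires
    leak: float = 0.9        # fraction of the potential kept each time step
    potential: float = 0.0   # current membrane potential

    def step(self, input_current: float) -> bool:
        """Advance one time step; return True if the neuron spikes."""
        # Old activity decays, then new input is added.
        # Positive input is excitatory, negative input is inhibitory.
        self.potential = self.potential * self.leak + input_current
        if self.potential >= self.threshold:
            self.potential = 0.0  # reset after firing
            return True
        return False


def run(neuron: LIFNeuron, inputs: List[float]) -> List[int]:
    """Return the time steps at which the neuron spiked."""
    return [t for t, current in enumerate(inputs) if neuron.step(current)]
```

With a steady input of 0.4 per step, this neuron needs three steps of accumulated input to cross its threshold, so `run(LIFNeuron(), [0.4] * 10)` returns `[2, 5, 8]`. With no input at all, it never fires.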

How does this process encode and manipulate information? That's an interesting and important question, and one we're only just starting to answer.

One of the ways we've gone about answering it is through what has been called theoretical neurobiology (or computational neurobiology). This has involved attempts to build mathematical models that reflect the behavior of nervous systems and neurons in the hope that this would allow us to identify some underlying principles. Neural networks, which focus on the organizational principles of the nervous system, were one of the efforts that came out of this field. Spiking neural networks, which attempt to build up from the behavior of individual neurons, are another.

Spiking neural networks can be implemented in software on traditional processors. But it's also possible to implement them through hardware, as Intel is doing with Loihi. The result is a processor very much unlike anything you're likely to be familiar with.

Spiking in silicon

The previous-generation Loihi chip contains 128 individual cores connected by a communication network. Each of those cores has a large number of individual "neurons," or execution units. Each of these neurons can receive input in the form of spikes from any other neuron: a neighbor in the same core, a unit in a different core on the same chip, or a unit on another chip entirely. The neuron integrates the spikes it receives over time and, based on the behavior it's programmed with, uses that to determine when to send spikes of its own to whatever neurons it's connected with.

All of the spike signaling happens asynchronously. At set time intervals, embedded x86 cores on the same chip force a synchronization. At that point, the neuron will redo the weights of its various connections—essentially, how much attention to pay to all the individual neurons that send signals to it.

Put in terms of an actual neuron, part of the execution unit on the chip acts as a dendrite, processing incoming signals from the communication network based in part on the weight derived from past behavior. A mathematical formula is then used to determine when activity has crossed a critical threshold and to trigger spikes of its own when it does. The "axon" of the execution unit then looks up which other execution units it communicates with, and it sends a spike to each.

In the earlier iteration of Loihi, a spike simply carried a single bit of information. A neuron only registered when it received one.

Unlike a normal processor, there's no external RAM. Instead, each neuron has a small cache of memory dedicated to its use. This includes the weights it assigns to the inputs from different neurons, a cache of recent activity, and a list of all the other neurons that spikes are sent to.
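As a rough software analogy for the dendrite, threshold, and axon roles described above, the sketch below keeps the three pieces of per-neuron memory (input weights, recent activity, and a list of targets) in one record and delivers single-bit spikes between records. Everything here is hypothetical: the names `NeuronState` and `step`, the threshold of 1.0, and the eight-entry history are illustrative choices and say nothing about how Loihi actually lays out its memory.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, Dict, List


@dataclass
class NeuronState:
    """Hypothetical per-neuron memory: weights, recent activity, targets."""
    weights: Dict[int, float] = field(default_factory=dict)  # sender id -> weight
    recent_spikes: Deque[int] = field(default_factory=lambda: deque(maxlen=8))
    fanout: List[int] = field(default_factory=list)          # ids this neuron signals
    potential: float = 0.0
    threshold: float = 1.0


def step(neurons: Dict[int, NeuronState], spiking: List[int], t: int) -> List[int]:
    """Deliver one round of single-bit spikes; return the ids that fire in response."""
    # "Axon": each spiking neuron looks up its targets.
    # "Dendrite": each target weights the spike according to who sent it.
    for src in spiking:
        for dst in neurons[src].fanout:
            neurons[dst].potential += neurons[dst].weights.get(src, 0.0)

    fired = []
    for neuron_id, neuron in neurons.items():
        if neuron.potential >= neuron.threshold:
            neuron.potential = 0.0          # reset after firing
            neuron.recent_spikes.append(t)  # remember when we fired
            fired.append(neuron_id)
    return fired
```

If neurons 0 and 1 both list neuron 2 as a target, and neuron 2 weights each of them at 0.5, a spike from either one alone leaves neuron 2 silent, while a spike from each pushes it over its threshold.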

One of the other big differences between neuromorphic chips and traditional processors is energy efficiency, where neuromorphic chips come out well ahead. IBM, which introduced its TrueNorth chip in 2014, was able to get useful work out of it even though it was clocked at a leisurely kilohertz, and it used less than 0.0001 percent of the power that would be required to emulate a spiking neural network on traditional processors. Mike Davies, director of Intel's Neuromorphic Computing Lab, said Loihi can beat traditional processors by a factor of 2,000 on some specific workloads. "We're routinely finding 100 times [less energy] for SLAM and other robotic workloads," he added.

What’s new in neuromorphics

We'll get back to how asynchronous electronic spikes can actually solve useful problems in a bit. First, we'll take a look at what has changed between Loihi (which we'll call "the original processor" for clarity's sake) and Loihi 2. The difference is informative, because Intel has had hardware in the hands of the research community for a few years, and the company was able to incorporate their feedback into the design decisions. So, the differences between the two, in part, reflect what the people who actually use neuromorphic processors have found is holding them back.

Some of the changes are the obvious things you'd expect in the transition between two generations of chips. Intel's using a more up-to-date manufacturing process, and it can now fit each core in roughly half the space needed in the original processor. Rather than being able to communicate with separate chips via a two-dimensional grid of connections, Loihi 2 can do so in three dimensions, allowing a stack of processing boards to greatly increase the total number of neurons. The number of embedded processors per chip, which help coordinate all the activity, has gone from three to six, and there are eight times as many neurons per chip.

But there are also some differences that are specific to Loihi's needs. Intel says it has gone through and optimized all the asynchronous hardware, giving Loihi 2 double the performance when updating a neuron's state and boosting the performance of spike generation ten-fold.

Other changes are very specific to spiking neural networks. The original processor's spikes, as mentioned above, only carried a single bit of information. In Loihi 2, a spike is an integer, allowing it to carry far more information and to influence how the recipient neuron sends spikes. (This is a case where Loihi 2 might be somewhat less like the neurons it's mimicking in order to perform calculations better.)
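To make the difference concrete, here is a minimal sketch contrasting the two kinds of input. It assumes, purely for illustration, that a graded spike's integer payload scales the sender's weight; the article does not say how Loihi 2 actually uses the value, and both function names are made up.

```python
from typing import List


def integrate_binary(weight: float, spikes: List[bool]) -> float:
    """Single-bit spikes: each one either adds the sender's weight or adds nothing."""
    return sum(weight for spike in spikes if spike)


def integrate_graded(weight: float, spikes: List[int]) -> float:
    """Integer spikes: the payload scales the contribution (an assumed rule)."""
    return sum(weight * payload for payload in spikes)
```

With a weight of 0.5, the binary train `[True, False, True]` contributes 1.0, while the graded train `[3, 0, 1]` contributes 2.0 over the same three time steps.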

Another major change is in the part of the processor that evaluates the neuron's state in order to determine whether to send a spike. In the original processor, users could perform a simple bit of math to make that determination. In Loihi 2, they now have access to a simplified programmable pipeline, allowing them to perform comparisons and control the flow of instructions. Intel's Davies told Ars that you can specify these programs down to the per-neuron level, meaning that two neighboring neurons could be running completely different software.
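One way to picture per-neuron programmability is to give each neuron its own small function that decides when to spike. The sketch below does that in ordinary Python. It is only an analogy: the `NeuronProgram` signature, the two example programs, and the threshold of 4 are invented for illustration and do not reflect Loihi 2's actual instruction set or toolchain.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

# A hypothetical "program": (state, input value) -> (new state, spike payload).
# A payload of 0 means "no spike."
NeuronProgram = Callable[[float, int], Tuple[float, int]]


def threshold_program(state: float, value: int) -> Tuple[float, int]:
    """Accumulate input and emit a unit spike once the total reaches 4."""
    state += value
    if state >= 4:
        return 0.0, 1
    return state, 0


def change_detector(state: float, value: int) -> Tuple[float, int]:
    """Emit a spike sized by how much the input changed since the last step."""
    delta = abs(value - state)
    return float(value), int(delta)


@dataclass
class ProgrammableNeuron:
    program: NeuronProgram
    state: float = 0.0

    def receive(self, value: int) -> int:
        """Run this neuron's own program on one input; return its spike payload."""
        self.state, payload = self.program(self.state, value)
        return payload
```

Two neighboring neurons built this way can see the same inputs and respond differently. Fed `2, 2, 2`, a neuron running `threshold_program` emits `0, 1, 0`; fed `2, 2, 5`, one running `change_detector` emits `2, 0, 3`.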

Davies also said that the way each neuron handles its internal memory is more flexible. Rather than specific aspects—like the list of neurons spikes should be sent to—having a fixed allocation, there's a pool of memory that can be divided up more dynamically.

These changes do far more than let Loihi 2 execute existing algorithms more efficiently; they actually let the chip run algorithms that were a poor fit for the original processor.

And that brings us back to the question of how neuromorphic computing gets anything done.

From spikes to solutions

How do you actually solve problems using something like a Loihi chip? You can make some parallels to quantum computing. There, the problem you want to solve gets converted into a combination of how you configure a set of qubits and the manipulations you perform on them. The rules of the system—the physics, in the case of quantum computing—then determine the final state of the system. That final state can then be read out and translated into a solution.

For neuromorphic computing, the problem is set up by configuring the axons, which determine which neurons signal to which targets, as well as the code that determines when a neuron sends spikes. From there, the rules of the system determine how the spiking behavior evolves, either from the initial state or in response to further input. The solution can then be read out by examining the spiking behavior of different neurons. "Computation emerges from the interactions of the neurons," is how Davies put it.

Intel provided a concrete example of this in a paper it published back in 2018. The example problem it used is finding a set of features that can be used to approximate the content of an image, in the same way that a series of circles can approximate the head of Mickey Mouse. This can be done on Loihi by assigning each neuron a feature that it represents and then having its spiking activity influenced by whether it recognizes that feature in an image. As things proceed, the neurons signal to each other in a way that tones down the activity of anything that isn't recognizing a feature.

The end result of this competition is that the neurons that represent features present in the image will be actively spiking, while those that don't are relatively quiet. This can be read out as a feature list and the process started over again by feeding the system a new image. While it might be faster to reset the whole processor to its initial state before showing a second image, it shouldn't be necessary—the system is dynamic, so changing the input will mean changing the spiking behavior, allowing a new population of neurons to gradually assert itself.
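The competition described above can be imitated with a few lines of ordinary code. The sketch below is a deliberately simplified stand-in, not the algorithm from Intel's 2018 paper: each "neuron" is driven by how well its feature matches the image, and whenever one fires, it suppresses other neurons in proportion to how much their features overlap with its own. The function name, the threshold, and the inhibition strength are all assumptions.

```python
from typing import Dict, List


def dot(a: List[float], b: List[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def compete(
    features: Dict[str, List[float]],
    image: List[float],
    steps: int = 20,
    threshold: float = 2.0,
    inhibition: float = 2.0,
) -> Dict[str, int]:
    """Count spikes per feature neuron under mutual inhibition (toy model)."""
    names = list(features)
    # Each neuron's drive is how strongly its feature matches the image.
    drive = {n: dot(features[n], image) for n in names}
    potential = {n: 0.0 for n in names}
    counts = {n: 0 for n in names}

    for _ in range(steps):
        for n in names:
            potential[n] += drive[n]
        fired = [n for n in names if potential[n] >= threshold]
        for n in fired:
            potential[n] -= threshold
            counts[n] += 1
        # A firing neuron tones down neurons whose features overlap with its own.
        for n in fired:
            for other in names:
                if other != n:
                    overlap = dot(features[n], features[other])
                    potential[other] = max(0.0, potential[other] - inhibition * overlap)
    return counts
```

Take three features, `a = [1, 0, 0]`, `b = [0, 1, 0]`, and `c = [0.5, 0, 0.5]`, and an image `[1, 1, 0]` made of `a` plus `b`. Feature `c` partly matches the image, but only through the pixel that `a` already explains. Over 20 steps, `a` and `b` each spike 10 times while `c` stays silent; with `inhibition=0.0`, `c` would spike 5 times. The spike counts are the readout.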

Learning on the fly and more

This dynamic behavior makes for a contrast with trained neural networks, which are very good at recognizing what they've been trained on but not flexible enough to recognize something they weren't trained on. Davies described work they've done with Loihi to recognize gestures based on video input. He said that it's possible to get the system to recognize new gestures, training it on the fly without altering its ability to recognize gestures it was previously trained on. (This training won't tie the gesture to a specific action; the Loihi system just does the recognition and relies on other hardware to take actions based on that recognition.)

Davies says these sorts of abilities have a lot of potential applications in robotics. Mobile robots have to be flexible enough to recognize and adjust to new circumstances when they find themselves facing a new environment. And any robot will see its behavior change as its parts wear down or get dirty, meaning its control system has to adjust to new performance parameters.

Those are the sorts of things that are traditionally associated with AI systems (whether they involve spiking neurons or not). But Davies also said that there are some very different use cases where spiking systems also perform well. One he mentioned was quadratic optimizations, which help with things like managing complex scheduling constraints (think of a nationwide rail system).

These can be solved using traditional computers, but the processing resources required rise rapidly with the number of constraints. Loihi has shown promising results on finding optimized solutions with a fraction of the computational resources, and Davies said it's flexible enough to be configured to either find the optimal solution or more quickly find a solution that's within 1 percent of the best.

(Intriguingly, these are the same types of problems that run well on D-Wave's quantum annealing hardware. Davies said that Los Alamos was preparing a paper comparing the two.)

Waiting for the software

While spiking neural networks can be very effective at solving these sorts of problems, the challenge has often been finding the people who understand how to use them. It is a very different type of programming and requires an equally different way of thinking about algorithm development. Davies said that most of the people who are currently adept in it come from a theoretical neurobiology background (or are still in the field). So far, this has meant that Intel has mostly pushed Loihi into the research community, something that has limited its ability to sell the processor more widely.

But long term, Intel hopes to see Loihi derivatives end up in a broad range of systems, from acting as a co-processor in embedded systems to large Loihi clusters in the data center. For that, however, it will need to be easy for companies to find people who can program for it.

To that end, Intel is coupling the release of Loihi 2 with the release of an open source software framework called Lava. "Lava is meant to help get neuromorphic [programming] to spread to the wider computer science community," Davies told Ars. He went on to say that, in the past, Intel hasn't provided enough abstraction from the inner workings of Loihi. If you wanted to run software on it, you had to understand spiking systems in detail. Lava is a way of giving people the ability to work with Loihi-based systems without having to know the details.

Lava should be accessible today, and the first Loihi 2 boards will be made available to the research community via a cloud service shortly. A board with a single chip is being made available for evaluation purposes, and it will be followed by an eight-chip system called Kapoho Point later this year.

Listing image by Aurich Lawson | Getty Images | Intel
